What is Feature Scaling?

Feature scaling is a technique used to standardize the range of independent variables or features of data. It is also known as data normalization or data standardization.

Why is it important?

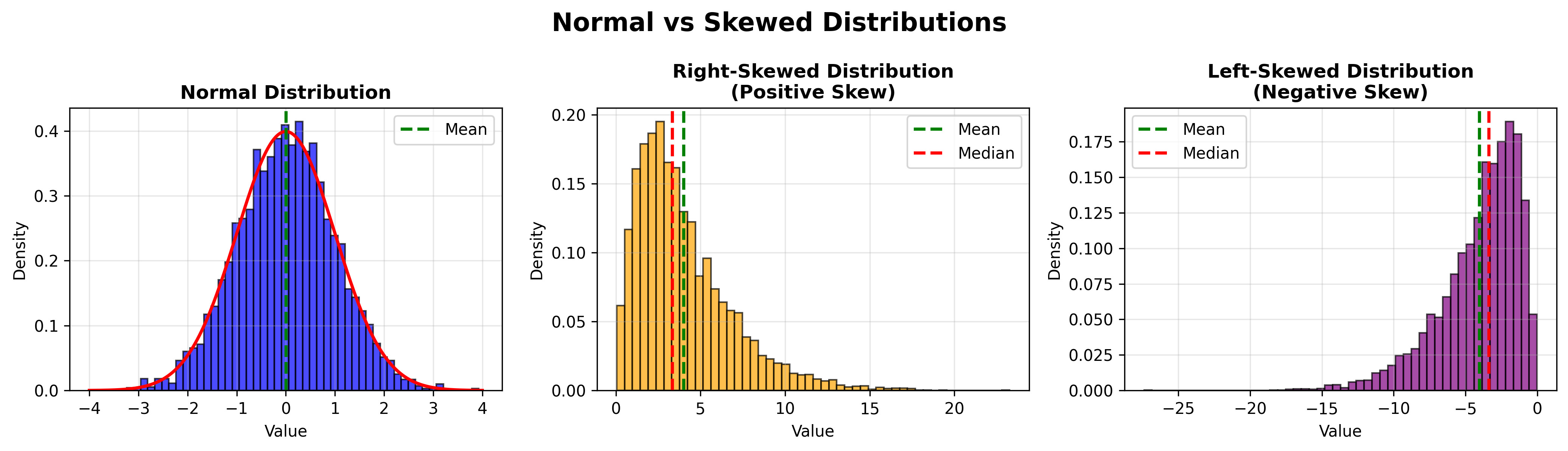

It is crucial because many machine learning algorithms perform better or converge faster when features are on a relatively similar scale and close to normally distributed.

Working with data that has vastly different scales, like age in years and income in dollars, can lead to biased results in algorithms that rely on distance calculations (e.g., k-nearest neighbors, k-means clustering) or gradient descent optimization (e.g., linear regression, logistic regression).
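To make the distance problem concrete, here is a small sketch using made-up people described by age and income. The names and numbers are hypothetical and only illustrate how the larger-scale feature dominates a raw Euclidean distance.

```python
import math

# Hypothetical people described as (age in years, income in dollars).
alice = (25, 50_000)
bob = (60, 51_000)    # very different age, similar income
carol = (26, 58_000)  # similar age, somewhat different income

# Raw Euclidean distances on the unscaled features.
dist_alice_bob = math.dist(alice, bob)
dist_alice_carol = math.dist(alice, carol)

print(round(dist_alice_bob, 1))    # 1000.6
print(round(dist_alice_carol, 1))  # 8000.0
```

On the raw values, Bob looks much closer to Alice than Carol does, even though he is 35 years older. The income differences, measured in thousands of dollars, swamp the age differences almost entirely.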

Feature scaling also preserves the relationships between features while ensuring that no single feature dominates the learning process due to its scale.

Common Methods

There are several methods for feature scaling, but two of the most common are Min-Max Scaling and Standardization (Z-score Normalization).
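This article focuses on standardization. For comparison, here is a minimal sketch of Min-Max Scaling, assuming its usual definition of rescaling each value to the 0 to 1 range with `(x - min) / (max - min)`. The `ages` values are hypothetical.

```python
import pandas as pd

# Hypothetical ages, used only for illustration.
ages = pd.Series([20, 35, 50, 80])

# Min-Max Scaling: the smallest value maps to 0 and the largest to 1.
ages_minmax = (ages - ages.min()) / (ages.max() - ages.min())

print(ages_minmax.tolist())  # [0.0, 0.25, 0.5, 1.0]
```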

Standardization (Z-score Normalization)

Standardization, also known as Z-score normalization, transforms your data so that it has a mean of 0 and a standard deviation of 1.

Let’s say you have a dataset with features like age (20-80) and income ($30k-$200k), as mentioned before. You want to standardize these features to ensure that they contribute equally to the analysis.

Here’s how you can do it in Python using pandas:

# data is your pandas DataFrame or Series
data_scaled = (data - data.mean()) / data.std()

Mathematical explanation

The formula for calculating the Z-score of a value is:

$$z = \frac{x - \mu}{\sigma}$$

Where:

- `z` is the standardized value

- `x` is the original value

- `μ` (mu) is the mean of the feature

- `σ` (sigma) is the standard deviation of the feature

What this does

- Centering: `data - data.mean()` shifts the data so that the new mean becomes 0

- Scaling: Dividing by `data.std()` scales the data so that the new standard deviation becomes 1

After this transformation, the scaled data will have:

- Mean = 0: The average of all values becomes zero

- Standard Deviation = 1: The spread of the data is normalized

- Shape Preservation: The distribution shape remains the same, just relocated and rescaled
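These properties can be checked on a small DataFrame. The sketch below uses hypothetical age and income values chosen to fall within the ranges mentioned above. The column names and numbers are assumptions for illustration only.

```python
import pandas as pd

# Hypothetical sample: ages between 20 and 80, incomes between $30k and $200k.
data = pd.DataFrame({
    "age": [20, 35, 50, 65, 80],
    "income": [30_000, 45_000, 80_000, 120_000, 200_000],
})

# Standardize every column at once; pandas applies the operation column-wise.
data_scaled = (data - data.mean()) / data.std()

# Each column should now have mean 0 (up to floating-point error) and std 1.
print(data_scaled.mean())
print(data_scaled.std())
```

Because `data.mean()` and `data.std()` return one value per column, each feature is centered and scaled with its own statistics, so age and income end up on the same footing.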

Example

If you have original values: [1, 2, 3, 4, 5]

- Mean (μ) = 3

- Standard deviation (σ) ≈ 1.58 (the sample standard deviation, which is what pandas' `std()` computes by default)

After standardization (applying the formula to each value):

- (1-3)/1.58 ≈ -1.27

- (2-3)/1.58 ≈ -0.63

- (3-3)/1.58 = 0

- (4-3)/1.58 ≈ 0.63

- (5-3)/1.58 ≈ 1.27

So, instead of having values [1, 2, 3, 4, 5], you now have approximately [-1.27, -0.63, 0, 0.63, 1.27].
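The same example can be reproduced in pandas. This sketch also shows one detail worth knowing: pandas' `std()` uses the sample standard deviation (`ddof=1`) by default, while passing `ddof=0` gives the population standard deviation and therefore slightly different z-scores.

```python
import pandas as pd

values = pd.Series([1, 2, 3, 4, 5])

sample_std = values.std()            # ddof=1 is the pandas default
population_std = values.std(ddof=0)  # divides by n instead of n - 1

z_sample = (values - values.mean()) / sample_std
z_population = (values - values.mean()) / population_std

print(round(sample_std, 2))           # 1.58
print(round(population_std, 2))       # 1.41
print(z_sample.round(3).tolist())     # [-1.265, -0.632, 0.0, 0.632, 1.265]
print(z_population.round(3).tolist()) # [-1.414, -0.707, 0.0, 0.707, 1.414]
```

The hand calculation above divides by the rounded value 1.58, which is why it shows 1.27 where the unrounded computation gives about 1.265.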